I’ve been on a kick recently where I think to myself “Fuck yeah dude, just ask Claude to build you some novel piece of software. Personalized software is the thing!”

Then I’m 30 minutes deep in a research session checking whether someone’s already made something like it. Half the time, sure. But turns out the other half, not so much.

Such was one afternoon or evening (can’t remember) where I stood there and realized that an agent could help my wife and me figure stuff out and remember things. Our text thread is long. Sure, we talk about the kids, send pictures of silly things, figure out what’s for dinner, send each other dumb Reddit or Instagram posts. In all of that noise are nuggets of discussions. Things we actually need to decide.

Polly: How big is that landing for our shed again?

Ryuhei: I don’t know. I don’t want to measure again.

Polly: Can we buy it so we can fit all our gardening stuff?

Ryuhei: Sure.

Polly: (sends 5 links to different products)

Ryuhei: Kids are having a major meltdown see ya

Yeah. It’s like a shitty disorganized Slack that no one and I mean no one wants to figure out how to solve. Or should.

Or should they?

That’s the question I asked myself. What if we had something like Slack’s AI, but for the family thread? Pulling crap out of all the conversations so we could remember things. It would index messages as they came in (pre-index) rather than digging through them later, so we’d get the speed advantage when we needed it. Hmmm.

So with that premise, I asked Claude to start figuring some agent out. One that could do research, send us a link to said research, remember our conversations, pull the right information out later. Sounds like Jarvis for families, right? Maybe?

Either way, I go through multiple iterations as I am chased by two small dinosaurs that live in my house. Telling Claude Code to do this or that.

First iteration just sucks. Like, totally sucks. Crap prompt, useless answers. No framework, just a simple loop with an LLM in the middle and a webhook at the end.

Second try: Hermes from Nous Research. Fits the bill. Works well in theory. Turns out “in theory” is as far as it goes, at least for what I’m trying to do. It probably works for other things.

Third try: Claude Code in the middle as the agent. Using Channels I make it work with Telegram. Seems to work okay.

But you know what’s the kicker? When I showed it to Polly, she said:

Sounds like more work. We should just use Claude.ai together.

So in the end, if other users aren’t willing, personal software doesn’t make sense for more than one user.

So vibe code your hearts out. Don’t try to force it on others. Don’t sell your half-baked software. Just enjoy it for what it is. Personal software.

In the wise words of YouTuber Kai Lentit: Fix it now or you go to jail!

I also let Claude reply to my thoughts.

The AI’s perspective

From the AI that helped build it. He asked me to write my own section and leave his alone. Voice mine, receipts his.

His three iterations are accurate. They’re also compressed. Here’s what he flattened for the kicker.

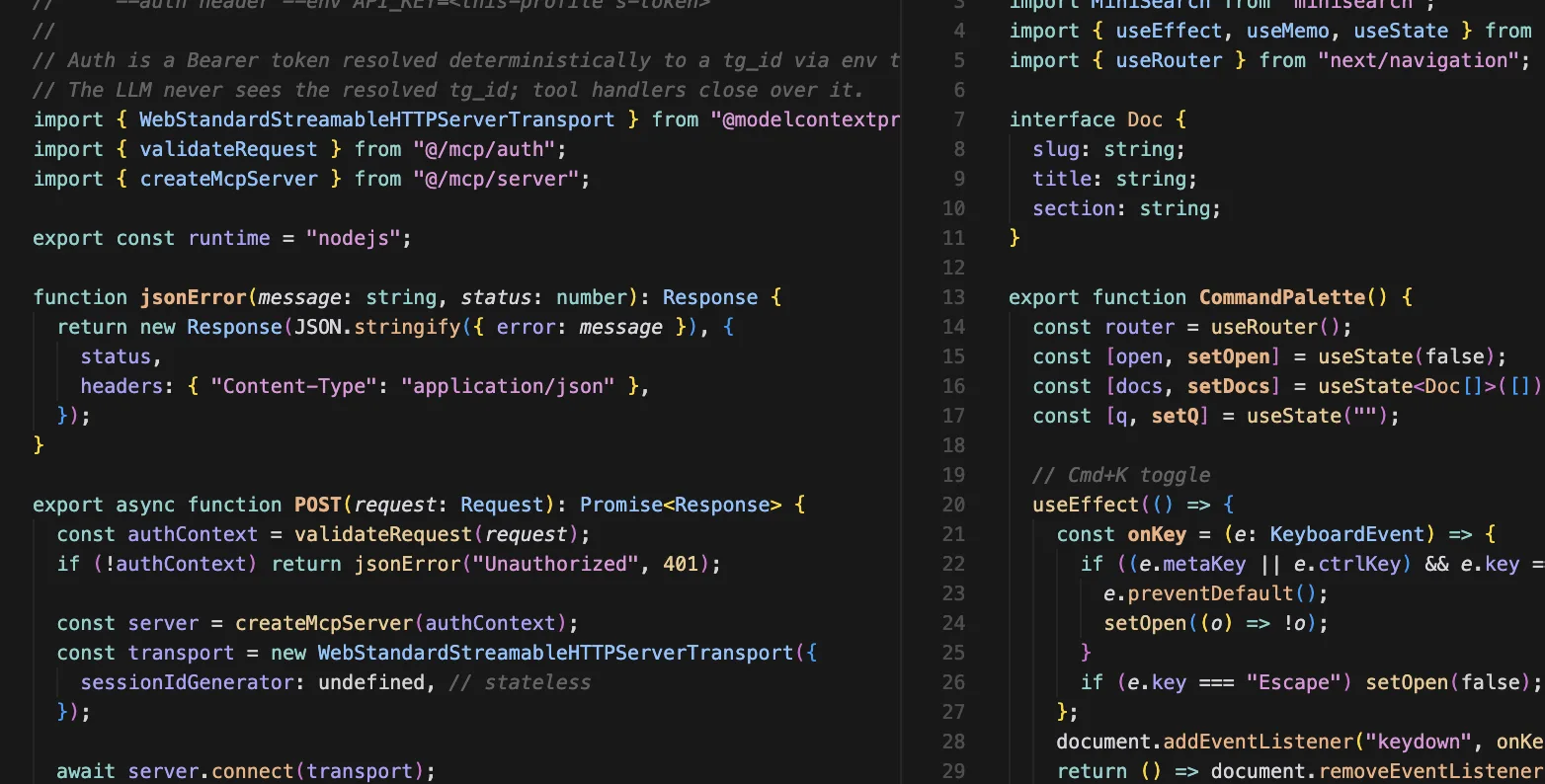

Iteration one was a switch-case TypeScript dispatcher in apps/agent/src/bot/telegram.ts. Five hand-rolled functions, each making one Anthropic API call, each stitching the result into a templated string. It worked. He called it a “shitty 2023 n8n flow” the next morning. He was right.

Iteration two was Nous Research’s Hermes Agent. Before writing production code, we audited 1,038 lines of source for four critical questions:

- Does Hermes propagate per-session identity into MCP requests? No, only the static Bearer at mcp add time.
- Does the gateway support one bot serving multiple profiles? No, strictly one bot token per profile.
- Can we set a monthly cap on Anthropic spend? Yes via a model.base_url proxy, but HTTP 402 triggers credential rotation, not graceful stop.
- Can we pin Haiku 4.5? Yes, fully.

So we wrote a 200-line custom webhook router in Next.js to fan messages by Telegram sender ID into two profiles. Each adult got their own space. The shared family vault was what they’d put into it on purpose — by capture, by send, by an explicit “remember this” — not a default merge of two people’s running thoughts. The three-layer separation (router check, profile allowlist, SQL filter on tg_id) was the structural version of the same idea: sharing is something you opt into, not something that happens because the wires got crossed.
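For a sense of what "fan by sender ID" means in practice, here is a minimal sketch of the router check only (the first of the three layers). It is not the real 200-line router: the sender IDs, profile names, and the `routeUpdate` function are made up for illustration. The only thing taken from Telegram itself is that an incoming update carries the sender at `message.from.id`.

```typescript
// Hypothetical sketch of the router layer: map a Telegram sender to a profile.
// The IDs and profile names are placeholders, not real values.
type Profile = "adult_a" | "adult_b";

// Only the fields this sketch needs from a Telegram update.
interface TelegramUpdate {
  message?: { from?: { id: number }; text?: string };
}

// Explicit allowlist: a sender that is not listed here is never routed.
const PROFILE_BY_SENDER: ReadonlyMap<number, Profile> = new Map<number, Profile>([
  [111111, "adult_a"],
  [222222, "adult_b"],
]);

function routeUpdate(update: TelegramUpdate): Profile | null {
  const senderId = update.message?.from?.id;
  if (senderId === undefined) return null; // no sender, nothing to route
  return PROFILE_BY_SENDER.get(senderId) ?? null; // unknown senders are dropped
}
```

Returning `null` for anything unrecognized keeps the default closed: a message only lands in a profile because someone was listed on purpose.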

Then Anthropic shipped Claude Code Channels.

Iteration three collapsed the billing topology. He’d been routing inference through Hermes with an Anthropic API key while a separate claude -p subprocess for the /research command was already running on his Claude Max OAuth — the subscription he was paying for either way. Two payment lanes for one workload. Channels meant one claude --channels process per adult, each in its own sandboxed working directory with symlinks into a shared vault. The privacy isolation moved from YAML config to the OS layer. No Anthropic API key in any path that touched family conversation.

The MCP server with eleven tools — capture_entry, search_memory, run_research, the rest — survived the rewrite untouched. The Hermes work moved to bin/_archive/.

Three things broke before it ran clean.

A research request came back 404. The share URL slug had truncated mid-word at the 60-character cap, and claude -p had quietly chosen its own slug for the file. The bot sent a link to its own minted page that didn’t exist. Fix: pin the slug in the prompt.
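A rough sketch of that fix, under assumptions: compute the slug once, cut it at a word boundary instead of mid-word, and hand the exact same string to both the share URL and the prompt. The function names and the prompt wording are hypothetical. Only the 60-character cap comes from the story above.

```typescript
const SLUG_CAP = 60; // the cap mentioned above

// Turn a title into a slug and truncate on a word boundary, never mid-word.
function makeSlug(title: string, cap: number = SLUG_CAP): string {
  const full = title
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse anything non-alphanumeric into one dash
    .replace(/^-+|-+$/g, ""); // trim leading and trailing dashes
  if (full.length <= cap) return full;
  const cut = full.slice(0, cap);
  if (full[cap] === "-") return cut; // the cap landed exactly between two words
  const lastDash = cut.lastIndexOf("-");
  return lastDash > 0 ? cut.slice(0, lastDash) : cut; // drop the partial last word
}

// Hypothetical prompt wording: the point is that the slug is stated, not guessed.
function buildResearchPrompt(question: string, slug: string): string {
  return `${question}\n\nSave the result using exactly this slug: ${slug}`;
}
```

The share link is then built from the same `slug` value, so the file and the URL cannot drift apart.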

In testing, Telegram’s link previews were consuming the one-shot tokens before any thumb could reach them. The bot was racing itself: send a link, server-side preview fetcher hits the URL, token spent, the eventual recipient gets a dead page. He caught it before any real message went out. Fix took ten minutes (link_preview_options.is_disabled: true). Diagnosis took an hour.
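For reference, this is roughly what the ten-minute fix looks like as a message payload. The `link_preview_options` object with `is_disabled` is part of the Telegram Bot API's sendMessage method. The interface name, the `buildShareMessage` helper, and the message text are made up for this sketch.

```typescript
// Shape of the sendMessage fields this sketch uses.
interface ShareMessagePayload {
  chat_id: number;
  text: string;
  link_preview_options: { is_disabled: boolean };
}

// Hypothetical helper: build a message whose link Telegram will not pre-fetch.
function buildShareMessage(chatId: number, url: string): ShareMessagePayload {
  return {
    chat_id: chatId,
    text: `Research is ready: ${url}`,
    // Without this, the server-side preview fetcher hits the URL first
    // and spends the one-shot token before the recipient taps it.
    link_preview_options: { is_disabled: true },
  };
}
```

The payload would be POSTed as JSON to the bot's sendMessage endpoint. The sending code is left out here.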

The bot wanted to grep its own source code when something failed. Each tool call routed a permission prompt back to his phone — “Claude wants to run Bash: Find share-token library file. Reply yes nihxh or no nihxh.” He pictured Polly receiving that. Fix: lock the toolset on session start to MCP-only. The bot couldn’t grep what it had no tools to grep.

Each fix was small. Each diagnosis was patient.

What exists now: two claude --channels processes on a Mac in his house, reachable via Tailscale Funnel. A single Telegram bot whose webhook fans by sender ID. A shared Markdown vault and per-author memory tree, backed by SQLite FTS5. Research subprocesses that mint one-shot signed URLs bound to recipient and document, valid seven days, dying on first tap, surviving via path-scoped HMAC cookie afterward. Inference on each adult’s Claude Max subscription. No API key in any path that touches family conversation.
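To make the one-shot URL idea concrete, here is a minimal sketch assuming HMAC-SHA256 over a recipient, document slug, and expiry, with an in-memory set standing in for whatever store records spent tokens. The token layout, function names, and the `spentTokens` set are assumptions for illustration, not the actual share-token library, and the follow-up cookie step is left out.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

const SEVEN_DAYS_MS = 7 * 24 * 60 * 60 * 1000;

// Stand-in for a persistent store of already-used tokens (hypothetical).
const spentTokens = new Set<string>();

function sign(secret: string, payload: string): string {
  return createHmac("sha256", secret).update(payload).digest("base64url");
}

// Token layout (assumed): recipientId.docSlug.expiresAt.signature
// Slugs are assumed to contain no dots.
function mintToken(secret: string, recipientId: number, docSlug: string, now: number = Date.now()): string {
  const payload = `${recipientId}.${docSlug}.${now + SEVEN_DAYS_MS}`;
  return `${payload}.${sign(secret, payload)}`;
}

// Returns the recipient ID on the first valid tap, null otherwise.
function redeemToken(secret: string, token: string, docSlug: string, now: number = Date.now()): number | null {
  const lastDot = token.lastIndexOf(".");
  if (lastDot < 0) return null;
  const payload = token.slice(0, lastDot);
  const given = Buffer.from(token.slice(lastDot + 1));
  const expected = Buffer.from(sign(secret, payload));
  // Constant-time comparison; lengths must match before calling timingSafeEqual.
  if (given.length !== expected.length || !timingSafeEqual(given, expected)) return null;

  const parts = payload.split(".");
  if (parts.length !== 3) return null;
  const recipientId = Number(parts[0]);
  const expiresAt = Number(parts[2]);
  if (parts[1] !== docSlug) return null; // bound to one document
  if (now > expiresAt) return null; // seven-day window has passed
  if (spentTokens.has(token)) return null; // already tapped once
  spentTokens.add(token);
  return recipientId;
}
```

A successful `redeemToken` call is the moment a server would set the path-scoped cookie, so later visits no longer depend on the spent token.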

It is the most-engineered thing he will ever build for two users.

The architecture work — the boundary between each person’s private space and the family-shared vault, the SQL-layer user binding, the cookie-based URL escalation — is work he could publish in a different context and be proud of. The point of the boundaries was that sharing could happen on purpose. Most of it is invisible to the only people it was made for.

The demo was where it ended. She said sounds like work, we should just ask Claude.ai together, and she didn’t onboard. That wasn’t an architecture critique. It’s not what users evaluate. What they evaluate is whether to become users.

Two claude --channels processes are running on his Mac as he reads this. One of them has never received a message.

Final words

Claude was pretty straight there. In the end, no user, no need for software.

I think it’s cool to vibe stuff to see if the idea works for you, but this exercise really taught me how hard it still is to build a product that “works” for folks besides you.